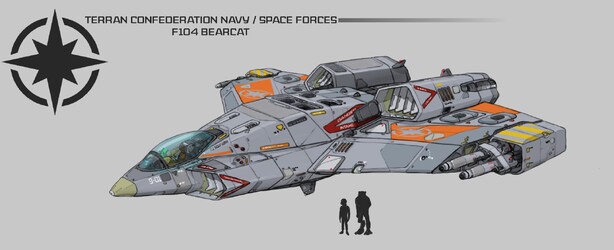

What Exactly Is This Video Enhancing AI?

We've posted a number of fancy video packages this year that feature the enhanced cutscenes ODVS has managed to create. We're careful to not refer to it as just "upscaling" since actual bits of new data are being added to create detail where none existed before. How is this accomplished and what are the limits? What do we mean by saying it's run through an AI routine? ODVS explains all this and more in a new article. You can learn about modern computerized neural networks and all the work ODVS does to manually pre-process the footage before he can turn the crank here.

GIGO – QUALITY IS KING

In my case, I use a Neural Net-trained application called Topaz Labs Video Enhance AI. The application has been loaded with several AI models that can be applied to source materials of various quality with varying results.

Almost as a case-in-point of why “Artificial Intelligence” is a misleading term, the classic programming adage GIGO – Garbage In, Garbage Out – really applies here. The application itself is not actually intelligent. It can’t look at a piece of footage like we can and think, “oh, that’s a ball. I imagine if it were bigger, the curve around the edge would look like this and the light would fall across it like that”. It’s still software, and software is stupid. It can only do what it’s been specifically programmed to do – it’s just that in this case, it hasn’t been entirely programmed by people.

The result is that you’ll always get better upscale results from higher-quality source footage. Luckily for us, Wing Commander IV’s FMV was all filmed on high-grade 35mm film, under professional lighting and, crucially, eventually released as DVD video. While the resolution of the DVD footage isn’t up to modern standards, the quality and visual fidelity of the footage is relatively high. So the “garbage in” half of GIGO doesn’t apply – our low-resolution footage is quite high quality.
